GitHub - IndicoDataSolutions/Foxhound: Scikit learn inspired library for gpu-accelerated machine learning

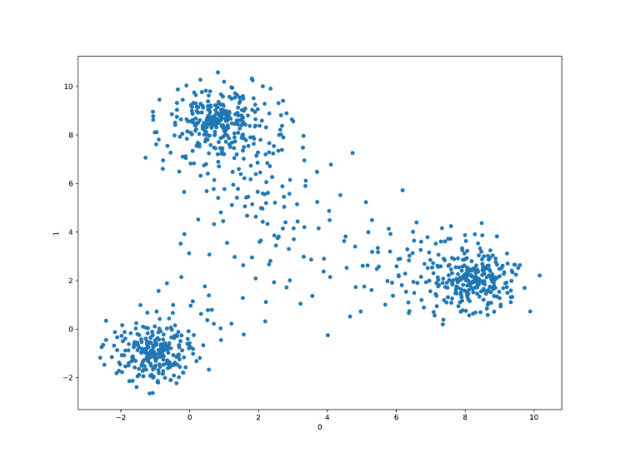

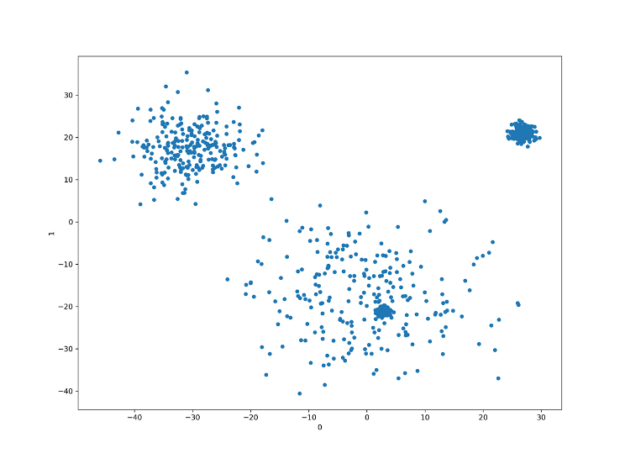

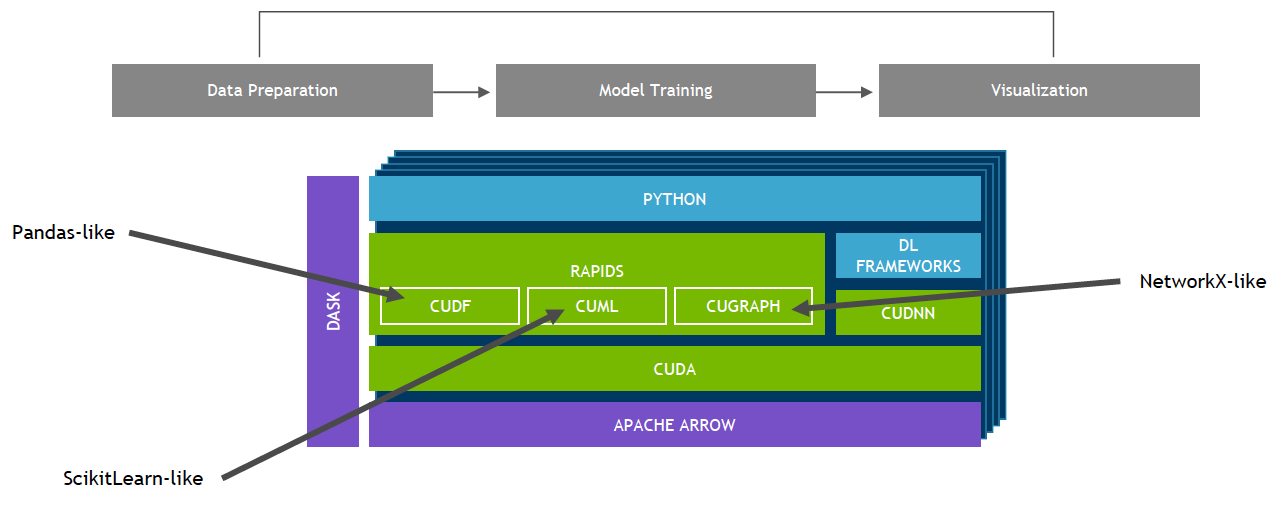

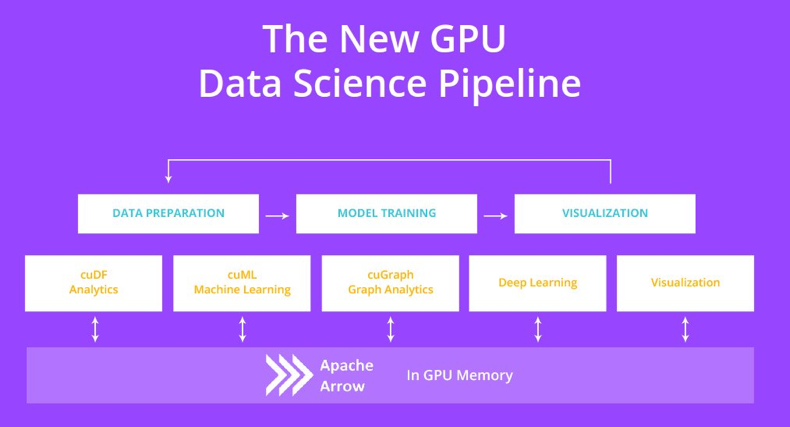

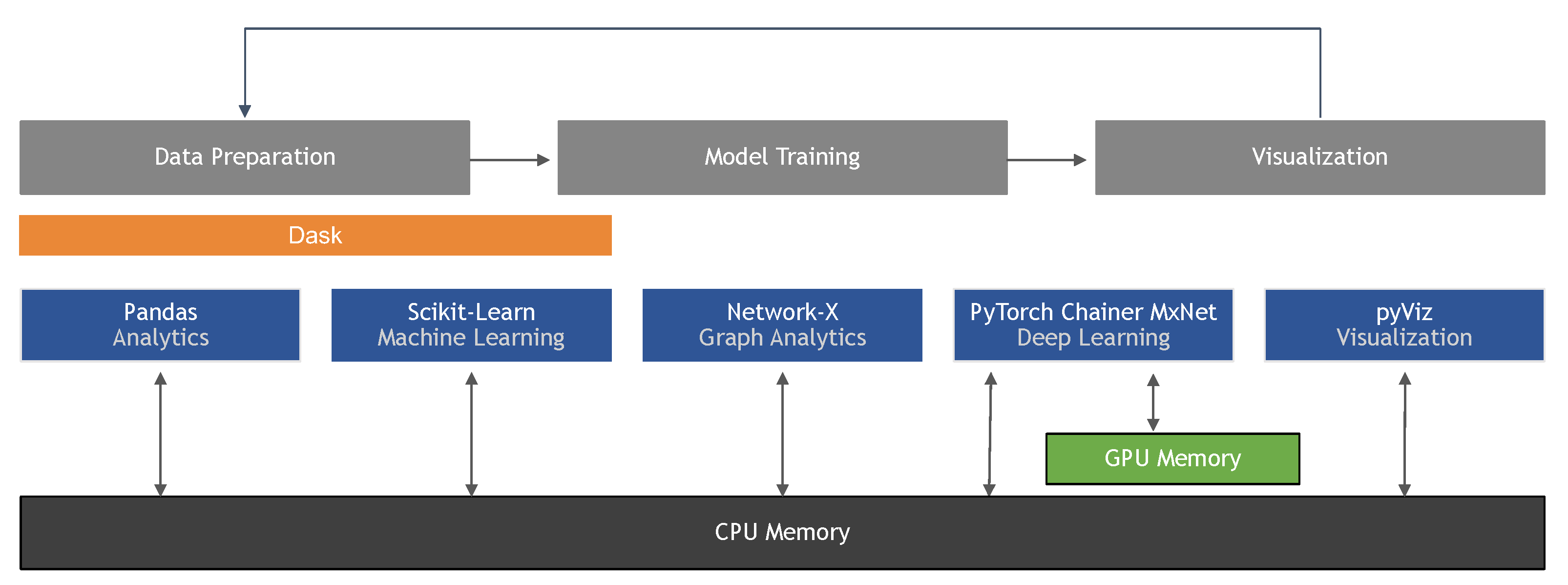

Boosting Machine Learning Workflows with GPU-Accelerated Libraries | by João Felipe Guedes | Towards Data Science

Information | Free Full-Text | Machine Learning in Python: Main Developments and Technology Trends in Data Science, Machine Learning, and Artificial Intelligence

Are there any plans for adding GPU/CUDA support for some functions? · Issue #5272 · scikit-image/scikit-image · GitHub

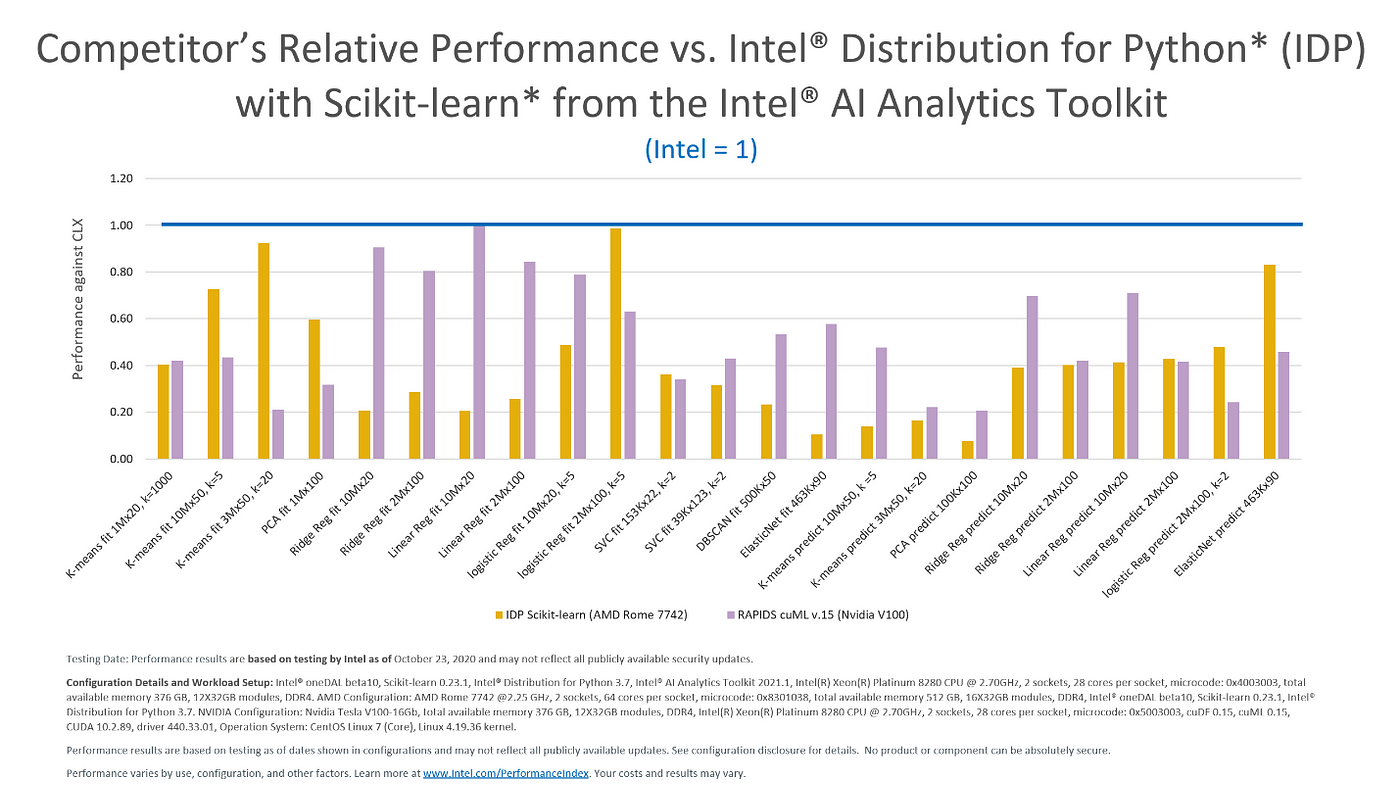

Intel Gives Scikit-Learn the Performance Boost Data Scientists Need | by Rachel Oberman | Intel Analytics Software | Medium

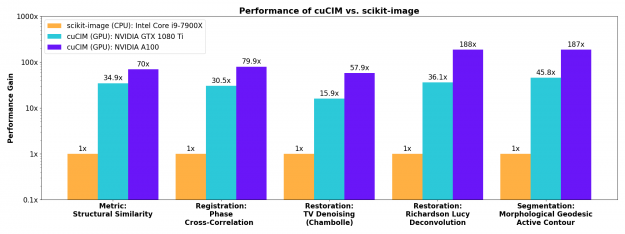

Accelerating Scikit-Image API with cuCIM: n-Dimensional Image Processing and I/O on GPUs | NVIDIA Technical Blog

Amazon.com: Python Data Analysis for Newbies: Numpy/pandas/matplotlib/scikit-learn/keras eBook : Joshua K. Cage: Kindle Store